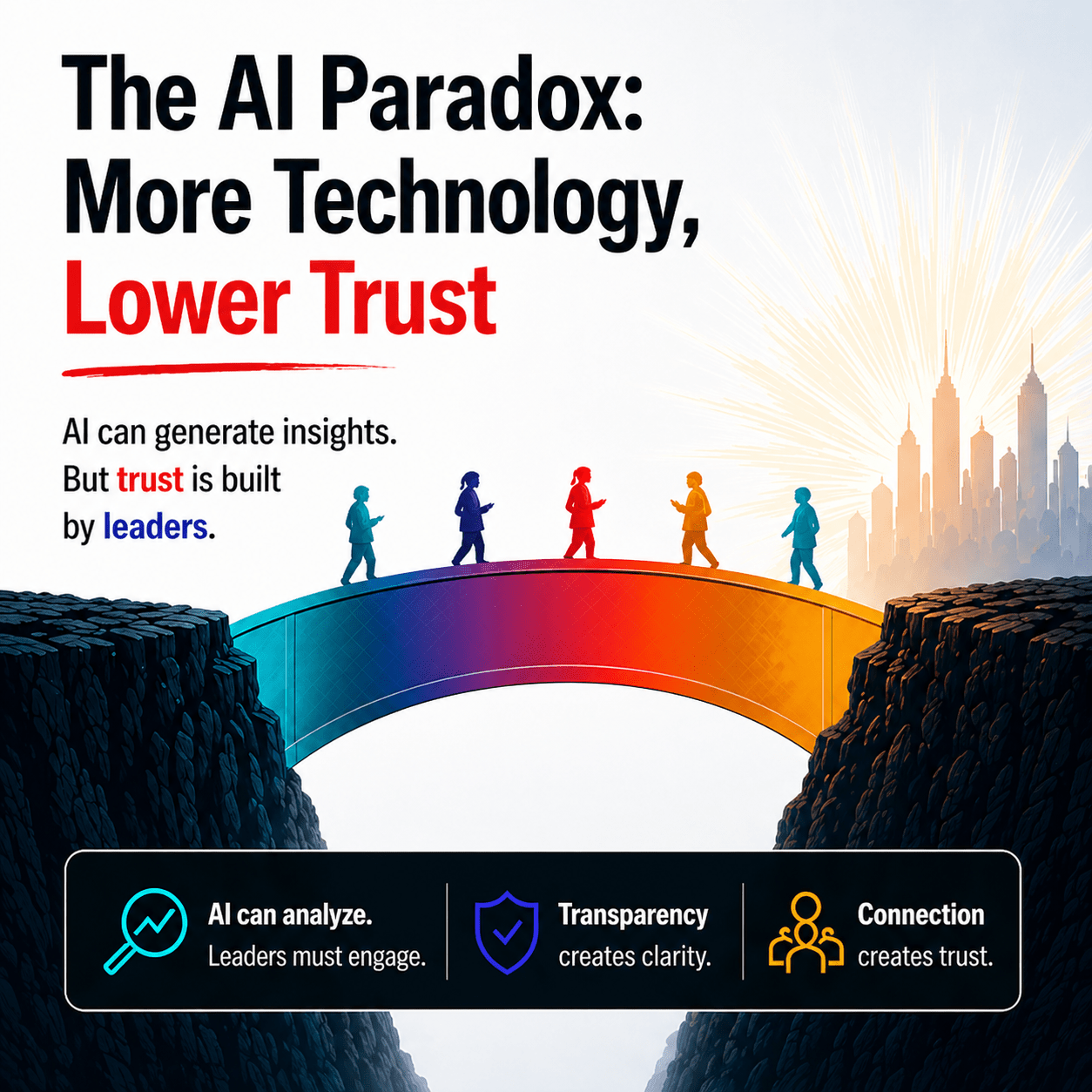

We are living in one of the most extraordinary moments in business history. Artificial intelligence is no longer a future concept; it is embedded in how organizations hire, promote, forecast, engage customers, and make decisions. From talent analytics to generative AI copilots, the promise is clear: faster insights, better decisions, and greater efficiency.

And yet, something unexpected is happening.

As technology accelerates, trust is declining.

This is the paradox leaders now face: the more organizations rely on AI to drive decisions, the more employees begin to question those decisions and the people behind them.

Why This Matters Now

The urgency is real.

According to Edelman’s Trust Barometer, trust in institutions, including business leadership, has been under pressure for years. Employees are increasingly questioning whether decisions are fair, transparent, and made with their best interests in mind.

At the same time, PwC research shows that while most CEOs believe AI will fundamentally reshape their organizations, far fewer believe their workforce fully trusts how it is used.

And McKinsey & Company reports that although AI adoption is accelerating rapidly, employee skepticism around fairness, transparency, and job impact remains one of the biggest barriers to scaling it successfully.

So, we are left with a tension:

- Organizations are investing heavily in AI

- Leaders are pushing for faster adoption

- Employees are quietly questioning both

This is not a technology gap. It is a trust gap.

The Human Reaction to Algorithmic Decisions

AI doesn’t just change how decisions are made. It changes how decisions are experienced.

When a leader decides, employees can interpret intent. They can ask questions. They can engage in dialogue.

When an algorithm is involved, the experience shifts. It can feel distant. Final. Unquestionable.

Research from Harvard Business School shows that people are significantly less likely to trust decisions when they don’t understand how they were made, even when those decisions are more accurate.

This is the heart of the paradox. Because trust is not built on accuracy alone.

Trust is built on understanding, inclusion, and perceived fairness.

Studies published in Nature Human Behaviour reinforce this, showing that people are more likely to reject algorithmic decisions when they:

- Cannot explain the outcome

- Feel reduced to data points

- Believe human judgment has been removed

Even good AI decisions can feel wrong when they lack human context.

Why More Technology Can Lead to Less Trust

There are five underlying dynamics driving this shift.

1. The Black Box Effect

AI systems are often difficult to explain. Even leaders may not fully understand how outputs are generated. When leaders cannot clearly articulate decisions, credibility weakens.

2. Speed Without Inclusion

AI accelerates decision-making, but humans need time to process and align. Research from Deloitte shows transformation fails when people feel excluded from the journey.

3. Objectivity vs. Humanity

AI brings objectivity, but people want to be seen beyond metrics. When individuals feel reduced to a score, engagement drops.

4. Fear of Hidden Bias

Research from MIT has shown that AI systems can replicate historical biases. Employees are aware of this, and it shapes their trust.

5. Loss of Agency

Studies from Stanford University highlight that perceived autonomy is a key driver of trust. When decisions feel automated, people feel a loss of control.

The Leadership Challenge Has Fundamentally Changed

Leadership today is no longer just about making good decisions. It is about making decisions that people understand, believe in, and feel part of.

Leaders are now required to:

- Translate AI insights into human language

- Balance data with judgment

- Address emotional responses to automation

- Create space for dialogue and challenge

This is not a technical evolution. It is a human evolution of leadership.

The Human Edge: The Trust Bridge in AI Transformation

AI can provide insight. But it cannot create trust. Trust is built in the space between data and dialogue.

At Human Edge, we see this as the integration of head, heart, and gut:

- Head: Using AI and data to inform decisions

- Heart: Understanding the human impact

- Gut: Applying judgment, ethics, and intuition

AI strengthens the head. But trust is built through the heart and gut. When leaders rely only on data, they create distance. When they integrate humanity, they create connection.

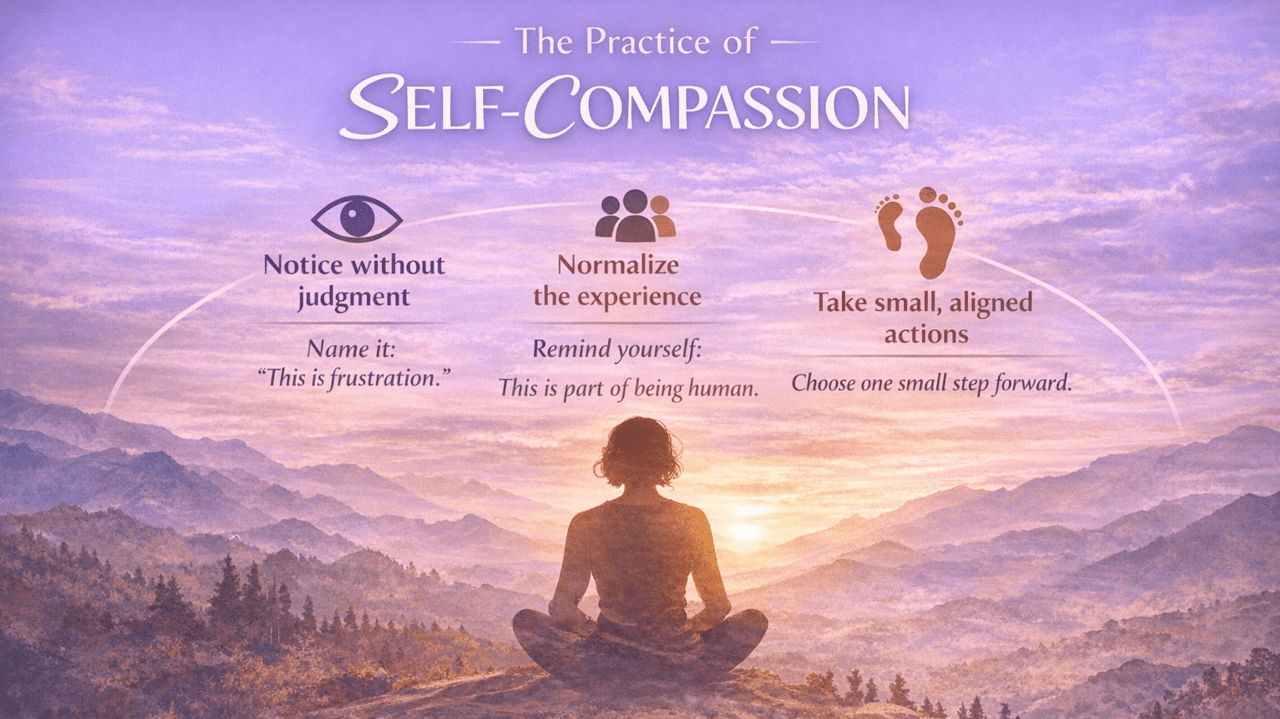

Psychological Safety: The Foundation of Adoption

One of the most consistent findings in transformation research is this:

People do not resist change. They resist feeling unsafe in change. Amy Edmondson’s work on psychological safety shows that when people feel safe to speak up, question, and challenge, engagement and performance rise.

In AI transformation, this becomes critical. If employees do not feel safe to question AI, they will not trust it.

They will comply but not commit. And compliance never drives real transformation.

Leaders must create environments where it is safe to say:

- “I don’t understand this decision.”

- “I’m concerned about fairness.”

- “Can we challenge this?”

That is where trust begins.

Transparency: Turning AI Into a Conversation

Transparency is not about explaining every technical detail. It is about creating clarity and inclusion.

Research from Accenture shows that organizations that are transparent about AI use see significantly higher trust and adoption.

Leaders can build transparency by explaining:

- Why AI is being used

- How it impacts people

- Where human judgment still applies

- What safeguards are in place

This shifts the narrative from “The system decided.” to “Here is how we are using this, and here is where we, as leaders, are accountable.”

And that shift is everything.

From Implementation to Adoption

Many organizations focus on implementing AI. But implementation is technical. Adoption is human.

Research from Bain & Company shows that transformation succeeds when employees feel a sense of ownership and involvement. If AI is imposed, it is resisted. If it is co-created, it is embraced.

What Leaders Must Do Now

Three shifts are essential:

1. From Certainty to Curiosity

Leaders do not need all the answers, but they must invite questions.

2. From Control to Co-Creation

AI should not be rolled out to people. It should be shaped with them.

3. From Data-Driven to Human-Centered

Data informs decisions. Humans create meaning.

How to Turn AI Insights into Trusted, Human Decisions

1. Translate AI Insights into Human Language

From data → meaning

Most leaders stop at what the data says. Trust is built when you explain what it means for people.

How to do it:

- Use the “So What / Now What” rule: So what does this insight mean for us? Now what will we do differently?

- Tell the story behind the data: Instead of “The model predicts a 20% drop in performance,” say “This suggests our current way of working may be overloading the team. Here’s where we need to adjust.”

- Make it relatable: Anchor insights in real situations that employees recognize.

If people can’t relate to it, they won’t trust it.

2. Balance Data with Judgment

From algorithm → accountability

AI should inform decisions, not replace leadership responsibility.

How to do it:

- Be explicit about where judgment applies: “This is what the data suggests. Here’s where I’m applying my judgment.”

- Stress-test the output: Ask what this might be missing. Look for context that the model cannot see (culture, timing, individual nuance).

- Own the decision: Never hide behind “The system decided.” Replace it with “We used this insight, and here’s the decision we are making.”

Trust increases when leaders stay visibly accountable.

3. Address Emotional Responses to Automation

From logic → empathy

AI triggers fear—loss of control, relevance, fairness. Ignoring this is where trust breaks.

How to do it:

- Name what people are feeling: “I know there may be concerns about how this impacts roles or decisions.”

- Normalize the reaction: Make it clear that skepticism is valid, not a form of resistance.

- Create space for concerns: Ask “What worries you about this?” and listen without immediately correcting or defending.

- Link to purpose: Show how AI supports people rather than replacing them.

People don’t resist AI. They resist what it might mean for them.

4. Create Space for Dialogue and Challenge

From rollout → co-creation

Adoption happens when people feel part of the change—not subject to it.

How to do it:

- Shift from telling → asking: “How do you see this working in your area?” “Where could this go wrong?”

- Invite challenge explicitly: “If you disagree with how we’re using this, I want to hear it.”

- Use small group dialogues: Psychological safety increases in smaller, more intimate settings.

- Close the loop: Show how feedback influenced decisions.

The Real Opportunity

The AI paradox is not just a challenge. It is an invitation. An invitation to evolve leadership. Because the organizations that will succeed are not those with the most advanced AI.

They are the ones with the highest levels of trust. AI will continue to accelerate. But trust will determine whether it truly transforms. And trust is built, not through technology, but through leadership.

Human Edge Perspective

At Human Edge, we help leaders bridge this gap, integrating data, self-awareness, and human-centered leadership to build trust at scale.

Because the future will not be led by AI alone.

It will be led by leaders who know how to make AI human.

Human Edge is a global leader in human‑centric leadership assessment and development, empowering individuals, teams, and organizations to unlock their full potential. Guided by science and driven by empathy, Human Edge transforms behavioral insight into practical, personalized growth experiences that help leaders show up with authenticity, clarity, and purpose.

Founded in 2017, Human Edge brings together experts in psychometrics, psychology, instructional design, and leadership development to deliver evidence-based solutions that create measurable impact. Through innovative products like the suite of CORE assessments, experiential learning modules, and integrated coaching, Human Edge supports leaders and experts across life sciences, FMCG, industrial, and technology sectors, achieving a 94% client retention rate and transforming more than 10,000 leaders worldwide.

With over 25,000 assessments completed and a growing global partner ecosystem, Human Edge is pioneering a new standard for humanistic leadership in an era shaped by AI and constant change. Its mission is to elevate human potential through deeply personalized, science‑backed development that fuels sustainable growth, stronger teams, and meaningful performance outcomes.